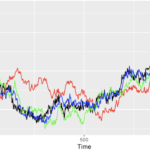

Monte Carlo simulations are a class of computational algorithms that use repeated random sampling to solve problems with a probabilistic interpretation. They are versatile and widely used in finance, physics, engineering, and other fields. In this article, we’ll take a deep dive into Monte Carlo simulations, focusing on their application to simulating stock price dynamics with the Geometric Brownian Motion model.

A Brief History

The Monte Carlo method takes its name from the Monte Carlo Casino in Monaco. The name was adopted as a code name in the 1940s by scientists at Los Alamos, whose nuclear weapons research had begun under the Manhattan Project, the top-secret research and development project of World War II. They needed to simulate the behavior of neutrons in nuclear reactions, and they used random sampling to tackle the problem.

Monte Carlo Simulations: An Overview

The central idea behind Monte Carlo simulations is to generate a large number of sample paths, or possible scenarios. These scenarios are usually projected over a specific time horizon, which is divided into discrete time steps. This discretization is what lets us approximate continuous-time phenomena, especially in financial modeling, where the pricing of assets occurs in continuous time.

Simulating Stock Price Dynamics with Geometric Brownian Motion

One of the essential applications of Monte Carlo simulations in finance is simulating stock prices. Financial markets are notoriously unpredictable, and understanding potential price movements is crucial for valuing financial instruments such as options. The randomness in stock price movements can be described by stochastic differential equations (SDEs).

Geometric Brownian Motion (GBM)

Geometric Brownian Motion (GBM) is a fundamental model used to simulate stock price dynamics.
It’s defined by a stochastic differential equation with two components: a deterministic drift term and a random diffusion (volatility) term. GBM is well suited to stocks but not to bond prices, which tend to revert to their face value over the long term.

The GBM Equation

The GBM model can be represented by the following stochastic differential equation (SDE):

$$
dS_t = \mu S_t \, dt + \sigma S_t \, dW_t
$$

In this equation, $\mu$ is the drift, $\sigma$ is the volatility, $S_t$ is the stock price, $dt$ is a small time increment, and $dW_t$ is the increment of a Brownian motion.

Simulating Stock Prices

To simulate stock prices using GBM, we use a recursive formula driven by standard normal random variables:

$$
S_{t+\Delta t} = S_t \exp\left(\left(\mu - \tfrac{1}{2}\sigma^2\right)\Delta t + \sigma \sqrt{\Delta t}\, Z_t\right)
$$

Here, $Z_t$ is a standard normal random variable and $\Delta t$ is the time increment. The recursion works because the increments of $W_t$ are independent and normally distributed.

Over the course of this article, we carried out the following steps:

Step 1: We acquired stock price data and computed simple returns.

Step 2: We split the data into training and test sets. From the training set, we calculated the mean (the drift, mu) and the standard deviation (the diffusion, sigma) of the returns. These two coefficients are the inputs to the simulations that follow.

Step 3: We introduced the key simulation parameters. Monte Carlo simulations rest on discretization: the continuous-time pricing of financial assets is divided into discrete intervals. We therefore need to specify both the forecasting horizon and the number of time increments.

Step 4: We defined a simulation function, which is good practice for problems like this. Within the function, we set the time increment (dt) and drew the Brownian increments (dW). The matrix of increments has shape num_simulations x steps, so each row describes one sample path.
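The steps above can be sketched in NumPy as follows. This is a minimal sketch rather than the article's original code: the price series is made up, the names `simulate_gbm`, `horizon`, and `seed` are assumptions chosen for illustration, and the sample standard deviation (`ddof=1`) is one reasonable choice for sigma. The lines after the increments (cumulative sum, time grid, closed-form formula, initial value) follow the description in the text that comes next.

```python
import numpy as np


def simulate_gbm(s_0, mu, sigma, num_simulations, horizon, steps, seed=None):
    """Simulate GBM sample paths using the closed-form solution.

    Returns an array of shape (num_simulations, steps + 1);
    the first column holds the initial price s_0.
    """
    rng = np.random.default_rng(seed)
    dt = horizon / steps  # length of one time increment

    # Brownian increments: one row per sample path, one column per step.
    dW = rng.normal(scale=np.sqrt(dt), size=(num_simulations, steps))
    # Brownian paths: cumulative sum along each row.
    W = np.cumsum(dW, axis=1)

    # Time grid dt, 2*dt, ..., horizon, repeated for every path.
    time_steps = np.broadcast_to(
        np.linspace(dt, horizon, steps), (num_simulations, steps)
    )

    # Closed-form GBM solution evaluated at every time point.
    paths = s_0 * np.exp((mu - 0.5 * sigma**2) * time_steps + sigma * W)
    # Insert the initial price into the first position of each row.
    return np.insert(paths, 0, s_0, axis=1)


# Hypothetical daily closing prices (made-up numbers, not market data).
prices = np.array(
    [100.0, 101.2, 100.7, 102.3, 103.1, 102.6, 104.0, 105.2, 104.8, 106.1]
)

# Step 1: simple returns.
returns = prices[1:] / prices[:-1] - 1

# Step 2: train/test split, then drift and diffusion from the training set.
train, test = returns[:6], returns[6:]
mu = train.mean()
sigma = train.std(ddof=1)  # sample standard deviation

# Step 3: simulation parameters (daily steps over the test window).
s_0 = prices[6]  # last price inside the training window
horizon = len(test)
steps = len(test)
num_simulations = 100

# Step 4: run the simulation.
paths = simulate_gbm(s_0, mu, sigma, num_simulations, horizon, steps, seed=42)
print(paths.shape)
```

Because mu and sigma are estimated from daily returns, the horizon here is measured in days, so `dt` equals one day. Passing a fixed `seed` makes the simulated paths reproducible.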
We then computed the Brownian paths (W) by taking a cumulative sum (np.cumsum) over the rows. To build the matrix of time steps (time_steps), we used np.linspace to generate evenly spaced values across the simulation’s time horizon and np.broadcast_to to give that array the same shape as the matrix of increments. Finally, we used the closed-form formula, $S_t = S_0 \exp\left(\left(\mu - \tfrac{1}{2}\sigma^2\right)t + \sigma W_t\right)$, to compute the stock price at each time point, and inserted the initial value into the first position of each row.

Variance Reduction Methods and Their Types

Variance reduction methods are techniques used in statistics and simulation to reduce the variance of an estimate. They are especially valuable in Monte Carlo simulations, where high variance leads to imprecise results unless a very large number of samples is drawn.

What Are Variance Reduction Methods?

Variance reduction methods are strategies for improving the accuracy and efficiency of estimates that rely on random sampling, such as those produced by Monte Carlo simulations. Their objective is to reduce the spread of the estimate around its expected value, so that a given level of precision can be reached with fewer samples.

Different Types of Variance Reduction Methods

Variance reduction methods encompass a range of techniques, each with its own approach to reducing variance and improving the precision of results. The choice of method depends on the specific problem and the underlying distribution. In summary, they are critical for improving the accuracy and efficiency of statistical estimates in any scenario involving randomness.
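To make the idea concrete, here is a minimal sketch of one widely used variance reduction technique, antithetic variates. The article does not single out this method; it is chosen here purely as an illustration, and the parameter values and the helper `terminal_prices` are hypothetical. The sketch estimates the expected GBM price at the horizon twice, once with independent draws and once with each draw reused with its sign flipped, and compares how much the two estimates vary across repeated experiments.

```python
import numpy as np


def terminal_prices(s_0, mu, sigma, horizon, z):
    """Closed-form GBM price at the horizon for standard normal draws z."""
    return s_0 * np.exp(
        (mu - 0.5 * sigma**2) * horizon + sigma * np.sqrt(horizon) * z
    )


rng = np.random.default_rng(42)
s_0, mu, sigma, horizon = 100.0, 0.05, 0.2, 1.0  # hypothetical parameters
n_pairs, n_repeats = 1_000, 200

plain_estimates = []
antithetic_estimates = []
for _ in range(n_repeats):
    # Plain Monte Carlo: 2 * n_pairs independent draws.
    z_plain = rng.standard_normal(2 * n_pairs)
    plain_estimates.append(
        terminal_prices(s_0, mu, sigma, horizon, z_plain).mean()
    )

    # Antithetic variates: n_pairs draws, each reused with its sign flipped,
    # so both estimators average the same number of prices.
    z = rng.standard_normal(n_pairs)
    z_anti = np.concatenate([z, -z])
    antithetic_estimates.append(
        terminal_prices(s_0, mu, sigma, horizon, z_anti).mean()
    )

# Spread of each estimator across the repeated experiments.
plain_var = np.var(plain_estimates)
antithetic_var = np.var(antithetic_estimates)
exact_mean = s_0 * np.exp(mu * horizon)  # E[S_T] under GBM

print(f"exact mean:          {exact_mean:.4f}")
print(f"plain variance:      {plain_var:.6f}")
print(f"antithetic variance: {antithetic_var:.6f}")
```

The technique works here because the terminal price is a monotone function of the normal draw, so the prices computed from z and -z are negatively correlated and their errors partly cancel. For payoffs that are not monotone in the draw, the benefit can be much smaller.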
Conclusion

Monte Carlo simulations, particularly when coupled with the Geometric Brownian Motion model, are invaluable tools for simulating stock price dynamics and understanding the probabilistic nature of financial markets. By embracing the power of randomness and iterative calculations, financial analysts and modelers gain valuable insights into pricing derivatives, managing risk, and making informed investment decisions. These simulations enable us to explore the many possible scenarios that financial markets may offer, making them a fundamental technique in modern finance.